Keep your finger on the pulse with QUA: a pulse-level quantum programming language

“So, it’s basically a programmable AWG, right?”

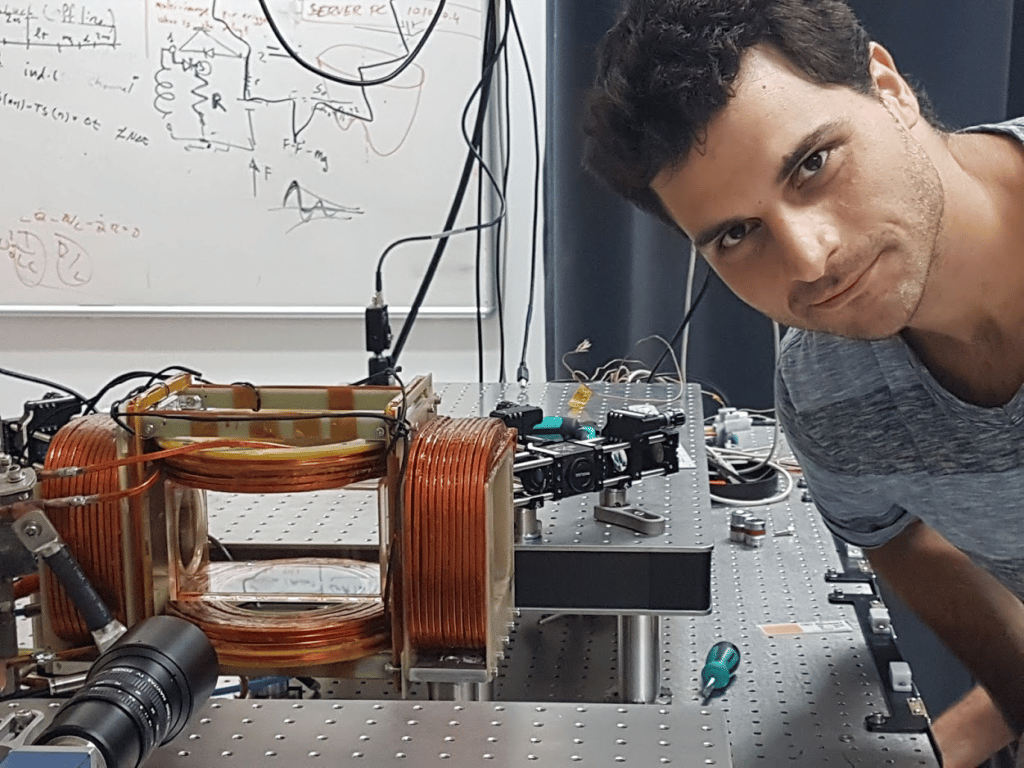

That was my immediate thought when I came across Quantum Machines for the first time. I was still a PhD student in Quantum Photonics at the time, working on generating cold Rydberg atoms in my leaky vacuum chamber. I started thinking about what comes after graduating, and it seemed like I wanted to put academia behind me for now. However, I wasn’t looking to step away from physics. I still loved it and wanted to keep working on physics problems, especially on experimental quantum mechanics, if I could. I then decided to contact Yonatan Cohen, one of the co-founders of QM, whom I kind of knew from the train commute from Tel-Aviv to the Weizmann Institute. I have since graduated, come aboard QM, and learned that QUA and the OPX quantum controller are a far cry from anything I’ve worked with before.

What is QUA?

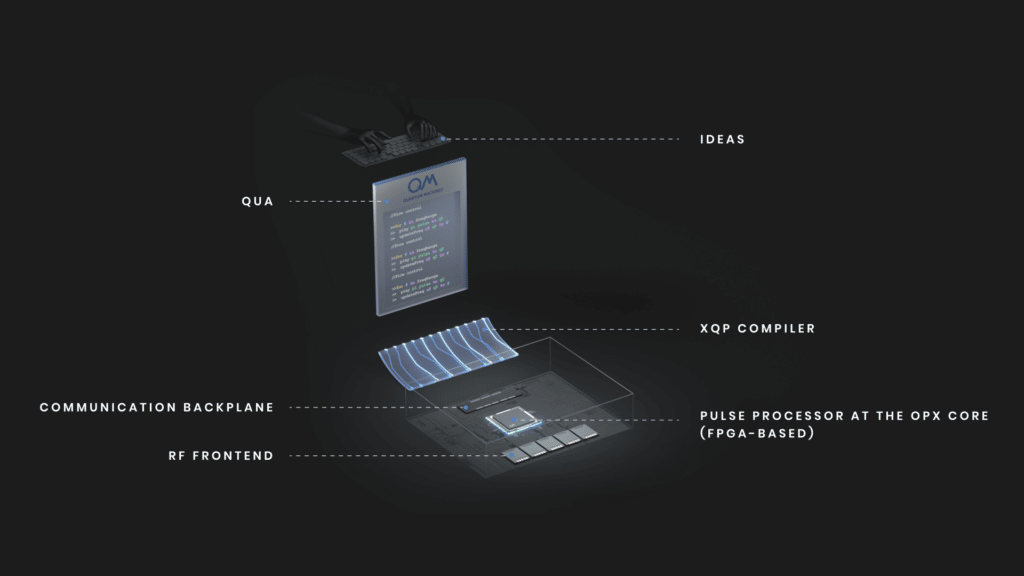

What’s so unusual about it? In what way is this quantum control platform different from an AWG with a digitizer or whatever? To me, the key concept to grasp was that QUA is a procedural programming language that runs on real-time hardware. It’s not Python, nor is it Matlab or C. It’s a full-featured programming language, with its own data types and flow control, which allows you to intuitively program and produce any sequence you can imagine.

QUA programs are compiled to a representation suitable for the control of dedicated hardware. When it runs on that hardware, it runs with deterministic timing, meaning you know exactly what is going to happen, down to the nanosecond. The kicker is that you can have your QUA programs generate control signals, perform measurements, process those measurements in non-trivial ways, and define how they respond using flow control. So you have measurements, computations, and decisions running in real-time.

What is the OPX controller?

As compelling as the idea of a programmable control system is, it isn’t actually novel. Plenty of experimentalists, myself included, had developed FPGA-based solutions to control their quantum experiments. It is a labor-intensive process for most experimentalists (if they don’t already happen to be digital electronics wizards), and it is difficult to maintain once the original developer moves on from the lab. Still, it’s been done time and again in labs around the world. However, the OPX is something much more than a professionally implemented solution to this problem.

Previously, I mentioned that QUA is compiled to a “representation suitable for control of dedicated hardware” but I didn’t explain what that meant. When C code is compiled, a set of instructions targeting a specific processor architecture is generated. That list of instructions is slightly different based on whether you’re running code on the ARM-based processor on your phone or the x86 chip on your laptop. QUA has its own target: the QOP (Quantum Orchestration Platform) has a new kind of general-purpose pulse processor that is designed specifically for this task. This processor is optimized to synthesize the complex and high bandwidth waveforms required to control qubits in real-time. It can virtually eliminate waveform upload times and dramatically accelerate experimental throughput. Long upload times are a common problem: in many experiments they turn parameter sweeps and averaging loops into a frustratingly slow exercise, and they can even be blockers for certain types of operations.

Going with the (experimental) flow

In addition to higher throughput, incorporating feedback into real-time signal synthesis opens up some powerful options for most qubit platforms, from superconducting to AMO, to photonic quantum computing, NV center qubits for sensing (read more on our Optically Addressable Qubits page) and more. Building on this, the QOP provides real-time signal processing, which empowers the feedback process immensely.

One of the neat ways this comes about is performing weighted demodulation of input signals on hardware and in real-time. If you think about it, this is immensely powerful. It allows you to lower the information content of a high bandwidth signal, thereby doing things like estimating the state of a superconducting or NV center qubit on the fly. But it also allows you to implement pretty flexible real-time filters. If you only perform weighting and no demodulation, you can integrate the incoming signal for a kind-of real-time averaging.
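To make the idea concrete, here is a minimal offline sketch of weighted demodulation in plain Python. It is not how the hardware implements it and it is not QUA; the function name `demodulate`, the sample rate, the 50 MHz tone, and the flat default weights are all assumptions chosen for illustration. The point is only that a thousand raw samples collapse into two numbers.

```python
import math

def demodulate(samples, f_if, sample_rate, weights=None):
    """Weighted demodulation of a real-valued trace (illustrative helper).

    Multiplies the trace by cos/sin references at f_if and returns the
    weighted sums (i_val, q_val). With f_if = 0 this is plain weighted
    integration of the trace.
    """
    n = len(samples)
    if weights is None:
        weights = [1.0 / n] * n  # assumption: flat weights, i.e. a plain average
    i_val = 0.0
    q_val = 0.0
    for k, (s, w) in enumerate(zip(samples, weights)):
        phase = 2.0 * math.pi * f_if * k / sample_rate
        i_val += w * s * math.cos(phase)
        q_val += w * s * math.sin(phase)
    return i_val, q_val

# Hypothetical readout trace: a 50 MHz tone sampled at 1 GS/s for 1 us.
sample_rate = 1e9
f_if = 50e6
amplitude, phi = 0.2, 0.3
trace = [amplitude * math.cos(2.0 * math.pi * f_if * k / sample_rate + phi)
         for k in range(1000)]

# 1000 samples in, two numbers out: amplitude and phase live in (i_val, q_val).
i_val, q_val = demodulate(trace, f_if, sample_rate)

# No demodulation (f_if = 0): the same routine simply averages the input.
mean_val, _ = demodulate([1.0, 1.0, 1.0, 1.0], 0.0, sample_rate)
```

Choosing non-flat `weights` is what turns this into a filter: samples where the signal is known to be informative can count for more than the rest.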

Demodulation, integration, and event time-tagging (check out the new high-res time tagging feature of QUA2.0) of the digital signal coming from a single-photon detector are all implemented so you can collect processed, rather than raw, data. If nothing else, it prevents the collection of huge amounts of data for post-processing, further speeding up the experimental flow. The language also gives you tools for further dimensionality reduction with near-real-time manipulation of the acquired data, including things like averaging, reshaping, and performing mathematical operations.
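As a toy picture of what "processed rather than raw" means for time-tagging, the sketch below reduces a sampled detector trace to a short list of arrival times. This is plain Python, not QUA, and the helper `time_tags`, the threshold, and the 1 ns sample spacing are hypothetical choices for illustration only.

```python
def time_tags(trace, threshold, dt_ns):
    """Return the times (in ns) at which the trace rises through the threshold."""
    tags = []
    above = False
    for k, v in enumerate(trace):
        is_above = v >= threshold
        if is_above and not above:
            tags.append(k * dt_ns)  # record only the rising edge
        above = is_above
    return tags

# Hypothetical digitized detector output, one sample per nanosecond.
trace = [0, 0, 1, 1, 0, 0, 0, 1, 0, 1, 1, 1]
tags = time_tags(trace, threshold=0.5, dt_ns=1.0)
```

Twelve samples become three timestamps here; on a real trace with sparse photon clicks, the reduction is far larger.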

Let’s get a(QUA)inted

So what does QUA actually look like? It’s pretty straightforward. With QUA, you write your pulse-level code in pretty much the same way as you would describe the experiment to someone. For example, take a look at this almost full Ramsey experiment.

    # Outermost loop: repeat the whole sweep N_avg times for averaging
    with for_(n, 0, n < N_avg, n + 1):
        # Sweep the drive frequency (values in MHz, converted to Hz below)
        with for_(freq, 60, freq < 70, freq + 1):
            update_frequency('qubit', freq * 1e6)
            # Sweep the delay between the two Ramsey pulses
            with for_(d, 10, d < 1000, d + 10):
                play('pi_pulse' * amp(0.5), 'qubit')
                wait(d, 'qubit')
                play('pi_pulse' * amp(-0.5), 'qubit')
                # Wait for the control pulses to finish before measuring
                align('qubit', 'output')
                measure('pulse', 'output', demod.full('x', I))

The first loop iterates N_avg times. In each iteration of the second loop, you call update_frequency to set the new value you want to measure, and then play the Ramsey 𝝅/2 – delay – 𝝅/2 sequence in the three lines inside the innermost loop (each pulse is the 𝝅 pulse scaled by amp(±0.5)). The value of d sets the delay duration in the inner-most loop. The final step is to wait until the control pulses are over (that’s what the align statement does) and then perform a measurement. In this case, the measure-statement is used to perform a weighted demodulation, but you can just as easily use it in the other ways I described above.

And that’s about it! No repeated upload of waveforms to a waveform generator, no need to synthesize the waveform yourself, and everything is clear, expressive, and deterministic. Pretty nice, isn’t it?

This is the way: on the fly waveforms

If you look closely, there’s a significant consequence to the fact that we are synthesizing waveforms on the fly with the QOP, rather than uploading them. When you upload, you often end up repeating the experiments multiple times for each set of independent variables like amplitude, frequency, etc. After all, you’ve already uploaded the waveform and don’t want to “pay” the cost of uploading it again later. In this mode of operation, you end up not averaging out the low-frequency noise.

It’s much better to have the averaging loop as the outermost loop, which averages the slow-moving stuff right out across your entire data set. You get this for “free” when you don’t have to “pay” for waveform upload.
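The effect of loop ordering is easy to see in a toy simulation. The sketch below is plain Python, not QUA, and everything in it is an assumption made for illustration: the "signal" is flat (zero), the only thing measured is a slow linear drift that grows with every shot, and the names `sweep` and `spread` are made up. Any structure left in the averaged result is therefore pure drift artifact.

```python
def sweep(n_points, n_avg, drift_per_shot, averaging_outermost):
    """Average a flat (zero) signal at each sweep point under a slow linear drift."""
    totals = [0.0] * n_points
    shot = 0  # global shot counter; the drift grows with it
    if averaging_outermost:
        # Averaging loop outside: every repetition visits all sweep points.
        for _ in range(n_avg):
            for ix in range(n_points):
                totals[ix] += drift_per_shot * shot
                shot += 1
    else:
        # Averaging loop inside: each point is fully averaged before moving on.
        for ix in range(n_points):
            for _ in range(n_avg):
                totals[ix] += drift_per_shot * shot
                shot += 1
    return [t / n_avg for t in totals]

def spread(values):
    """Peak-to-peak variation across the sweep."""
    return max(values) - min(values)

inner = sweep(10, 100, 1e-3, averaging_outermost=False)
outer = sweep(10, 100, 1e-3, averaging_outermost=True)

inner_spread = spread(inner)  # drift imprinted across the sweep
outer_spread = spread(outer)  # same drift, shared almost equally by all points
```

In this particular model, the drift artifact across the sweep is smaller by a factor of `n_avg` when the averaging loop is outermost, because every sweep point samples the drift over the whole run rather than over its own short time window.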

There are really a ton of features hiding here, and I think what finally convinced me that this isn’t just another AWG was helping clients implement their experimental workflows. It brings the ease of a Python Read-Evaluate-Print Loop to the tangled, beautiful mess of wires that is the “factory floor” of quantum mechanics.

How did this not exist when I was a PhD student?